Professor Stephen Hawking

Angel Theory pt.1

M-Systems V4.09

Transcribed by Nick Ray Ball 8th May to 22nd Nov 2016

Index

- Stephen Hawking and Yuri Milner to announce space exploration initiative “Starshot”

- Information Preservation and Weather Forecasting for Black Holes.

- THE THEORY OF EVERYTHING by Stephen Hawking

- Hawking, The Theory of Everything (Including Black Hole particle theory)

- Hawking & Mlodinow – The Grand Design

- Uncertainty Principle, Determinism, Make Testable Theories by repeating an experiment many times…

- Inflation, Quantum Mechanics, General Relativity & the Warpage of Time creates Spacetime.

- M Theory by Stephen Hawking Part 1

- Dimensions & the laws of nature are extremely fine-tuned

- Spiritual Introduction to M Theory (pt. 2) and the fine tuning of the laws of nature.

- Energy a conserved quantity, Black Holes, Einstein & M-Theory (pt3)

- Pythagoras, the father of theoretical physics, and his Theory of Strings

- Hawking’s mind of God – ‘I don’t believe that the ultimate theory will come by steady work along existing lines’

- What is Reality the Matrix, Galileo, and Model Dependent Realism

- Observation vs theory & Model dependent realism plus Gates Code & Holograms

- Economics is an ‘effective theory.’ Free will is not always rational

- Model dependent realism and a network of theories called M-Theory (pt.4)

- The Uncertainty Principle & QSF

- Scientists must accept theories that agree with experiment

- Atoms are a bit like people

- Alternative Histories – Edit for Angel Cities

- A Model is a good model if it…

1. Stephen Hawking and Yuri Milner to announce space exploration initiative “Starshot”

M-Systems 6, 12, 13, 14

8th May 2016

14.00 Stephen Hawking

Good afternoon. We are here today to talk about Breakthrough ‘Starshot’ and our future in space.

What makes human beings unique? There are many theories. Some say it is language or tools, others say it’s logic or reasoning. They obviously have not met many humans.

I believe what makes us unique is transcending our limits.

Nick Ray’s Comment:

If this project costs $100,000,000, what could you do with a gazillion dollars? The thing about the economics of S-World is that when everyone is living in a mansion, we need to find more things to build. And Mission Gliese (S-World UCS) is the most expensive thing we could imagine that made sense. So, if S-World boosts our economy to a quarter of a gazillion a year and half was spent on space exploration, we would have a multi-gazillion dollar project budget (US$ not ZIM$).

How’s that for transcending our limits !!

S-World: Powered by Angel Theory.

Stephen continues: (15.00)

Gravity pins us to the ground, but I just flew to America. I lost my voice, but I can still speak thanks to my voice synthesiser. How do we transcend these limits? With our minds and our machines.

The limit that confronts us now is the great void between us and the stars, but now we can transcend it. With light beams, light sails, and the lightest spacecraft ever built, we can launch a mission to Alpha Centauri within a generation.

Today we commit to this great leap into the cosmos, because we are human and our nature is to fly.

Professor Dyson (Princeton) (18.00)

The Atlantic style of exploring is like Columbus: an entrepreneurial adventure for the thrill of it.

The Pacific style is that of the Polynesians, who (a thousand years earlier) explored in small boats, hopping from island to island looking for a place to live.

Professor Dyson suggests we should first try the Polynesian style. But I bet that’s mostly due to practicality, i.e. there is no big entrepreneurial adventurer available yet.

Nick Ray Ball

We would like Professor Dyson and Ed Witten to change the syllabus at Princeton to assist S-World development.

Mae Jemison (31.42)

No one asks a child to look at the sky

34.00

Human space travel: a starship reaching another star system within 100 years

Professor Stephen Hawking (40.00)

7 Questions answered.

- What could the development of new methods of propulsion mean for humankind’s future in space?

Without new methods of propulsion we simply cannot get very far, not unless we are prepared to spend thousands of years in flight. Light-driven nanocraft are the most pragmatic technology available. Others, like fusion or antimatter, are a long way in the future.

- In your estimations, what is the probability of finding intelligent alien life in the next 20 years and why?

The probability is low… probably. (Laughs from the audience.)

But the discoveries of the Kepler mission suggest that there are billions of habitable planets in our galaxy alone. And there are at least 100 billion galaxies in the visible universe. So, it seems likely that there are others out there.

- If we find signs of intelligent alien life, what should we do next?

We should hope that they don’t find us!

- Why should humankind aspire to reach another star system?

Firstly, because there are no greater heights to aspire to than the stars!

Secondly, it is not wise to keep all our eggs in one fragile basket. Life on Earth faces dangers from astronomical events like asteroids and supernovas and other dangers from ourselves. If we are to survive as a species, we must ultimately spread to the stars.

- Do you have any theories as to what intelligent life might look like?

Judging by the election campaign, definitely not like us.

- What is Professor Hawking hoping to get out of this project?

Ultimately, a successful launch to Alpha Centauri within a generation.

- What scientific finding of your life are you most proud of?

I would like to be remembered for my work on Hawking radiation and the entropy of black holes.

Nick Ray Ball

This last point of Hawking’s encouraged me to look at his work on Black Holes…

2. Information Preservation and Weather Forecasting for Black Holes.

M-Systems: 5, 8 & 15

8th May 2016

Nick Ray Ball: I note that, for the most part, this is not something I can understand yet, but I have managed to add a point of interest at the end based on Chaos Theory, which makes Hawking interesting for that subject.

At last, after 5 years, I found someone who is interested in Chaos Theory. We also note some links to String Theory, but I’m not really sure whether Hawking is suggesting that the connection is part of his solution or counts against it.

We will look at this more closely another day.

Indeed in time I hope to translate this for the masses, but first I have to translate it for myself.

It’s a process, read it, dream it, consider it, Google it, and go for a nice long walk… Continue until understood.

Hawking Radiation and Black Holes

What Stephen Hawking Really Said About Black Holes?

https://www.youtube.com/watch?v=L8GCR88T3fE

Information Preservation and Weather Forecasting for Black Holes

http://arxiv.org/abs/1401.5761

http://arxiv.org/pdf/1401.5761v1.pdf

Information Preservation and Weather Forecasting for Black Holes∗

S. W. Hawking, DAMTP, University of Cambridge, UK

Abstract: It has been suggested [1] that the resolution of the information paradox for evaporating black holes is that the holes are surrounded by firewalls, bolts of outgoing radiation that would destroy any in-falling observer. Such firewalls would break the CPT invariance of quantum gravity and seem to be ruled out on other grounds. A different resolution of the paradox is proposed, namely that gravitational collapse produces apparent horizons but no event horizons behind which information is lost. This proposal is supported by ADS-CFT and is the only resolution of the paradox compatible with CPT. The collapse to form a black hole will in general be chaotic and the dual CFT on the boundary of ADS will be turbulent. Thus, like weather forecasting on Earth, information will effectively be lost, although there would be no loss of unitarity.

Charge, Parity, and Time Reversal Symmetry

https://en.wikipedia.org/wiki/CPT_symmetry

Charge, Parity, and Time Reversal Symmetry is a fundamental symmetry of physical laws under the simultaneous transformations of charge conjugation (C), parity transformation (P), and time reversal (T). CPT is the only combination of C, P and T that is observed to be an exact symmetry of nature at the fundamental level. The CPT theorem says that CPT symmetry holds for all physical phenomena, or more precisely, that any Lorentz invariant local quantum field theory with a Hermitian Hamiltonian must have CPT symmetry.

Parity Transformation

https://en.wikipedia.org/wiki/Parity_(physics)

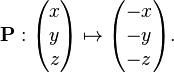

In quantum mechanics, a parity transformation (also called parity inversion) is the flip in the sign of one spatial coordinate. In three dimensions, it is also often described by the simultaneous flip in the sign of all three spatial coordinates (a point reflection).
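
In standard textbook notation (the symbol P for the parity operator is the usual convention, assumed here), the three-dimensional point reflection can be written as:

```latex
\mathbf{P}: \begin{pmatrix} x \\ y \\ z \end{pmatrix} \mapsto \begin{pmatrix} -x \\ -y \\ -z \end{pmatrix}
```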

Anti-de Sitter/conformal field theory correspondence

https://en.wikipedia.org/wiki/AdS/CFT_correspondence

In theoretical physics, the anti-de Sitter/conformal field theory correspondence, sometimes called Maldacena duality or gauge/gravity duality, is a conjectured relationship between two kinds of physical theories. On one side are anti-de Sitter spaces (AdS) which are used in theories of quantum gravity, formulated in terms of string theory or M-theory. On the other side of the correspondence are conformal field theories (CFT) which are quantum field theories, including theories similar to the Yang–Mills theories that describe elementary particles.

Unitarity

https://en.wikipedia.org/wiki/Unitarity_(physics)

In quantum physics, unitarity is a restriction on the allowed evolution of quantum systems that ensures the sum of probabilities of all possible outcomes of any event is always 1.
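
As a rough illustration of what that restriction means in practice, the sketch below applies a small unitary matrix to a two-level quantum state and checks that the probabilities still sum to 1. The matrix `U` and the state `psi` are hypothetical examples chosen for this note; they do not come from Hawking's paper.

```python
import math

def apply(matrix, state):
    """Multiply a square complex matrix by a state vector."""
    return [sum(m * s for m, s in zip(row, state)) for row in matrix]

def total_probability(state):
    """Sum of |amplitude|^2 over all possible outcomes."""
    return sum(abs(a) ** 2 for a in state)

# Hypothetical example: a Hadamard-like 2x2 unitary matrix.
h = 1 / math.sqrt(2)
U = [[h, h], [h, -h]]

# An arbitrary normalized two-level state (amplitudes 0.6 and 0.8i).
psi = [0.6 + 0j, 0.8j]

evolved = apply(U, psi)

# Both totals should equal 1 up to floating-point rounding.
print(total_probability(psi), total_probability(evolved))
```

A non-unitary evolution would let this total drift away from 1, which is the sense in which a pure state turning into a mixed one is described as a "loss of unitarity".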

Hawking continues:

∗ Talk given at the fuzz or fire workshop, The Kavli Institute for Theoretical Physics, Santa Barbara, August 2013. arXiv:1401.5761v1 [hep-th] 22 Jan 2014

Some time ago [2] I wrote a paper that started a controversy that has lasted until the present day. In the paper, I pointed out that if there were an event horizon, the outgoing state would be mixed. If the black hole evaporated completely without leaving a remnant, as most people believe and would be required by CPT, one would have a transition from an initial pure state to a mixed final state and a loss of unitarity. On the other hand, the ADS-CFT correspondence indicates that the evaporating black hole is dual to a unitary conformal field theory on the boundary of ADS. This is the information paradox.

Recently there has been renewed interest in the information paradox [1]. The authors of [1] suggested that the most conservative resolution of the information paradox would be that an in-falling observer would encounter a firewall of outgoing radiation at the horizon.

There are several objections to the firewall proposal. First, if the firewall were located at the event horizon, the position of the event horizon is not locally determined but is a function of the future of the space-time.

Another objection is that calculations of the regularized energy momentum tensor of matter fields are regular on the extended Schwarzschild background in the Hartle-Hawking state [3, 4]. The outgoing radiating Unruh state differs from the Hartle-Hawking state in that it has no incoming radiation at infinity. To get the energy momentum tensor in the Unruh state one therefore had to subtract the energy momentum tensor of the ingoing radiation from the energy momentum in the Hartle-Hawking state. The energy momentum tensor of the ingoing radiation is singular on the past horizon but is regular on the future horizon. Thus, the energy momentum tensor is regular on the horizon in the Unruh state. So, no firewalls.

For a third objection to firewalls I shall assume that if firewalls form around black holes in asymptotically flat space, then they should also form around black holes in asymptotically anti-de Sitter space for very small lambda. One would expect that quantum gravity should be CPT invariant. Consider a gedankenexperiment in which Lorentzian asymptotically anti-de Sitter space has matter fields excited in certain modes. This is like the old discussions of a black hole in a box [5]. Non-linearities in the coupled matter and gravitational field equations will lead to the formation of a black hole [6]. If the mass of the asymptotically anti-de Sitter space is above the Hawking-Page mass [7], a black hole with radiation will be the most common configuration. If the space is below that mass the most likely configuration is pure radiation.

Gedankenexperiment

http://www.britannica.com/topic/Gedankenexperiment

Gedankenexperiment (German: “thought experiment”), term used by German-born physicist Albert Einstein to describe his unique approach of using conceptual rather than actual experiments in creating the theory of relativity.

http://arxiv.org/abs/1102.5012

Asymptotically Safe Lorentzian Gravity

Elisa Manrique, Stefan Rechenberger, Frank Saueressig

(Submitted on 24 Feb 2011)

The gravitational asymptotic safety program strives for a consistent and predictive quantum theory of gravity based on a non-trivial ultraviolet fixed point of the renormalization group (RG) flow. We investigate this scenario by employing a novel functional renormalization group equation which takes the causal structure of space-time into account and connects the RG flows for Euclidean and Lorentzian signature by a Wick-rotation. Within the Einstein-Hilbert approximation, the β-functions of both signatures exhibit ultraviolet fixed points in agreement with asymptotic safety. Surprisingly, the two fixed points have strikingly similar characteristics, suggesting that Euclidean and Lorentzian quantum gravity belong to the same universality class at high energies.

Anti-de Sitter space

https://en.wikipedia.org/wiki/Anti-de_Sitter_space

In mathematics and physics, n-dimensional anti-de Sitter space (AdSₙ) is a maximally symmetric Lorentzian manifold with constant negative scalar curvature. It is the Lorentzian analogue of hyperbolic space, just as Minkowski space is the analogue of Euclidean space and de Sitter space is the analogue of elliptical space.

It is best known for its role in the AdS/CFT correspondence. The anti-de Sitter space and de Sitter space are named after Willem de Sitter (1872–1934), professor of astronomy at Leiden University and director of the Leiden Observatory. Willem de Sitter and Albert Einstein worked together closely in the 1920s in Leiden on the space-time structure of the universe.

In the language of general relativity, anti-de Sitter space is a maximally symmetric vacuum solution of Einstein’s field equation with a negative (attractive) cosmological constant Λ, corresponding to a negative energy density and positive pressure of the vacuum.

In mathematics, anti-de Sitter space is sometimes defined more generally as a space of arbitrary metric signature (p, q). In physics, often only the case of one timelike dimension is considered. This corresponds to the equivalent metric signatures (n−1, 1) and (1, n−1), where the choice is by a sign convention.

Hawking Continues…

Whether or not the mass of the anti-de Sitter space is above the Hawking-Page mass, the space will occasionally change to the other configuration. That is the black hole above the Hawking-Page mass will occasionally evaporate to pure radiation, or pure radiation will condense into a black hole. By CPT the time reverse will be the CP conjugate. This shows that in this situation, the evaporation of a black hole is the time reverse of its formation (modulo CP), though the conventional descriptions are very different. Thus, if one assumes quantum gravity is CPT invariant, one rules out remnants, event horizons, and firewalls.

Further evidence against firewalls comes from considering asymptotically anti-de Sitter metrics that fit in an S¹ cross S² boundary at infinity. There are two such metrics: periodically identified anti-de Sitter space and Schwarzschild anti-de Sitter. Only periodically identified anti-de Sitter space contributes to the boundary to boundary correlation functions because the correlation functions from the Schwarzschild anti-de Sitter metric decay exponentially with real time [8, 9]. I take this as indicating that the topologically trivial periodically identified anti-de Sitter metric is the metric that interpolates between collapse to a black hole and evaporation. There would be no event horizons and no firewalls.

The absence of event horizons means that there are no black holes – in the sense of regimes from which light can’t escape to infinity. There are however apparent horizons which persist for a period of time. This suggests that black holes should be redefined as metastable bound states of the gravitational field. It will also mean that the CFT on the boundary of anti-de Sitter space will be dual to the whole anti-de Sitter space, and not merely the region outside the horizon.

The no hair theorems imply that in a gravitational collapse the space outside the event horizon will approach the metric of a Kerr solution.

However, inside the event horizon, the metric and matter fields will be classically chaotic. It is the approximation of this chaotic metric by a smooth Kerr metric that is responsible for the information loss in gravitational collapse. The chaotic collapsed object will radiate deterministically but chaotically. It will be like weather forecasting on Earth that is unitary but chaotic, so there is effective information loss. One can’t predict the weather more than a few days in advance.
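
The idea of a system that is fully deterministic yet practically unpredictable can be sketched with a toy model. The example below uses the logistic map, a standard textbook chaotic system; it is an assumption chosen for illustration and has nothing to do with the actual metric inside a collapsing object. Two runs start a tiny distance apart and follow exactly the same rule, yet end up completely different.

```python
def logistic_trajectory(x0, r=4.0, steps=50):
    """Iterate the logistic map x -> r*x*(1-x), a standard toy chaotic system."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

# Two starting points that differ by one part in ten billion.
a = logistic_trajectory(0.2)
b = logistic_trajectory(0.2 + 1e-10)

# Print how far apart the two deterministic runs are at selected steps.
for step in (0, 10, 20, 30, 40, 50):
    print(step, abs(a[step] - b[step]))
```

Nothing random happens at any step, so no information is destroyed in principle; the forecast fails only because the starting point can never be measured precisely enough. That is the sense of "effective information loss" in the passage above.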

(Nick Ray Ball: But you can set in motion a series of events that changes the weather in the long term; see Special Project: African Rain.)

[1] A. Almheiri, D. Marolf, J. Polchinski, J. Sully, Black Holes: Complementarity or Firewalls?, J. High Energy Phys. 2, 062 (2013)

[2] S. W. Hawking, Breakdown of Predictability in Gravitational Collapse, Phys. Rev. D 14, 2460 (1976)

[3] M. S. Fawcett, The Energy-Momentum Tensor near a Black Hole, Commun. Math. Phys. 89, 103-115 (1983)

[4] K. W. Howard, P. Candelas, Quantum Stress Tensor in Schwarzschild Space-Time, Physical Review Letters 53, 5 (1984)

[5] S. W. Hawking, Black holes and Thermodynamics, Phys. Rev. D 13, 2 (1976)

[6] P. Bizon, A. Rostworowski, Weakly Turbulent Instability of Anti-de Sitter Space, Phys. Rev. Lett. 107, 031102 (2011)

[7] S. W. Hawking, D. N. Page, Thermodynamics of Black Holes in Anti-de Sitter Space, Commun. Math. Phys. 87, 577-588 (1983)

[8] J. Maldacena, Eternal black holes in anti-de Sitter, J. High Energy Phys. 04, 21 (2003)

[9] S. W. Hawking, Information Loss in Black Holes, Phys. Rev. D 72, 084013 (2005)

3. THE THEORY OF EVERYTHING by Stephen Hawking

M-Systems: 5, 9, 15 & 16

8th May 2016

http://www.crowhealingnetwork.net/pdf/Stephen%20Hawking%20-%20Theory%20of%20Everything.pdf

The following is a summary of Stephen Hawking’s talk as printed by The Bulletin of the University of Toronto.

On April 29, 1980, I gave my inaugural lecture as the Lucasian Professor of mathematics at Cambridge. My title was, Is the End in Sight for Theoretical Physics? I described the progress we had already made in the last hundred years in understanding the universe and asked what the chances were that we would find a complete unified theory of everything by the end of the century. Well, the end of the century is almost here. Although we have come a long way, particularly in the last three years, it doesn’t look as if we are going to quite make it.

In my 1980 lecture, I described how we had broken down the problem of finding a theory of everything into a number of more manageable parts. First of all, we had divided the description of the universe around us into two parts.

One part is a set of local laws that tell us how each region of the universe evolves in time, if we know its initial state, and how it is affected by other regions. The other part is a set of what are called boundary conditions. These specify what happens at the edge of space and time. They determine how the universe begins and, maybe, how it ends.

Many people, including probably a majority of physicists, feel that the task of theoretical physics should be confined to the first part, that of formulating local laws that describe how the universe evolves in time. They would regard the question of how the initial state is determined as being beyond the scope of physics and belonging to the realms of metaphysics or religion.

But I’m an unashamed rationalist. In my opinion the boundary conditions of the universe that determine its initial state are as legitimate a matter for scientific inquiry as are the laws that govern how it evolves.

In the early 1960s the forces that were known to physics were classified into four categories that seemed to be separate and independent of each other. The first of the four categories was the gravitational force, which is carried by a particle called the graviton. Gravity is by far the weakest of the four forces. However, it makes up for its low strength by having two important properties. The first is that it is universal. That is, it affects every particle in the universe in the same way. All bodies are attracted to each other. None are unaffected or repelled by gravity.

The second important property of the gravitational force is that it can operate over long distances. Together, these two properties mean that the gravitational forces between the particles in a large body all add up and can dominate over all other forces.

The second of the four categories into which the forces were divided is the electromagnetic force, which is carried by a particle called the photon. Electromagnetism is a million billion, billion, billion, billion times more powerful than the gravitational force, and like gravity, it can act over great distances. However, unlike gravity, it does not act on all particles in the same way. Some particles are attracted, some are unaffected and some are repelled.
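
The "million billion, billion, billion, billion" figure (about 10^42) can be checked with a short calculation. The sketch below assumes the comparison is between two electrons, which the talk does not specify, and uses rounded standard SI values for the constants. Because both forces fall off as the square of the distance, the separation cancels out of the ratio.

```python
# Rounded standard SI values for the physical constants.
COULOMB_K = 8.9875517923e9        # Coulomb constant, N m^2 / C^2
ELEM_CHARGE = 1.602176634e-19     # elementary charge, C
GRAV_G = 6.67430e-11              # gravitational constant, N m^2 / kg^2
ELECTRON_MASS = 9.1093837015e-31  # electron mass, kg

def force_ratio(charge, mass):
    """Ratio of electrostatic to gravitational force between two identical particles.

    Both forces scale as 1/r^2, so the distance r cancels.
    """
    return (COULOMB_K * charge ** 2) / (GRAV_G * mass ** 2)

# Assumption: two electrons are used as the test particles.
ratio = force_ratio(ELEM_CHARGE, ELECTRON_MASS)
print(f"{ratio:.2e}")
```

The result lands on the order of 10^42, in line with the figure quoted in the talk. Choosing heavier particles such as protons would give a smaller ratio, so the exact number depends on which particles are compared.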

The attractions and repulsions between the particles in two large bodies will cancel each other out almost exactly, unlike the gravitational forces between the particles, which will all be attractive. That is why one falls towards the Earth, and not towards a television set. On the other hand, on the scale of molecules and atoms, with only a relatively small number of particles, electromagnetic forces dominate gravitational forces utterly. On the even smaller scale of the nucleus of an atom, a trillionth of a centimetre, the third and fourth categories, the weak and strong nuclear forces, dominate other forces.

Gravity and electromagnetism are described by what are called field theories, in which there are a set of numbers at each point of space and time that determine the gravitational or electromagnetic forces.

When I began research in 1962, it was generally believed that the weak and strong nuclear forces could not be described by a field theory. But reports of the death of field theory proved to be an exaggeration. A new type of field theory was put forward by Chen Ning Yang and Robert Mills. In 1967 Abdus Salam and Steven Weinberg showed that a theory of this type could not only describe the weak nuclear forces but could also unify them with the electromagnetic force. I remember this field theory being treated with great scorn by most particle physicists. However, it agreed so well with experiments that the 1979 Nobel Prize was awarded to Salam, Weinberg and Glashow, who had proposed similar unified theories. The Nobel committee took quite a gamble because the final confirmation of the theory didn’t come until 1983, with the discovery of the W and Z particles. (That is to say, the W and Zed particles, for those of us who are British and don’t use an American speech synthesizer.)

The success sparked a search for a single “grand unified” Yang-Mills theory that would describe all three kinds of force. Grand unified theories are not very satisfactory. Indeed, their name is rather an exaggeration. They are not that grand as theories because they contain about 40 numbers that cannot be predicted in advance but had to be adjusted to agree with experiments. One would hope the ultimate theory of the universe is unique and does not contain any adjustable quantities. How would those values have been chosen?

But the most powerful objection to the grand unified theories was that they weren’t fully unified. They didn’t include gravity and there wasn’t any obvious way of extending them so that they did.

It may be that there is no single fundamental theory. Instead there may be a collection of apparently different theories, each of which works well in certain situations. Different theories agree with each other where their regions of validity overlap. Thus, they can all be regarded as different aspects of the same theory. But there may be no single formulation of the theory that can be applied in all situations.

Theoretical physics may be like mapping the Earth. One can accurately represent a small region of the Earth’s surface, as a map on a sheet of paper. But if one tries to map a larger region, one gets distortions because of the curvature of the Earth. It is not possible to represent every point on the Earth’s surface on a single map. Instead one uses a collection of maps, which agree in the regions where they overlap.

As I said, even if we find a complete unified theory, either in a single formulation, or as a series of overlapping theories, we will have solved only half the problem. The unified theory will tell us how the universe evolves in time, given the initial state. But the theory does not in itself specify the boundary conditions at the edge of space and time that determine the initial state. This question is fundamental to cosmology.

We can observe the present state of the universe and we can use the laws of physics to calculate what it must have been at earlier times. But all that tells us is that the universe is as it is now because it was as it was then. We cannot understand why the universe is the way it is unless cosmology becomes a science, in the sense it can make predictions. And that requires a theory of the boundary conditions of the universe.

There have been various suggestions for the initial conditions of the universe, such as the tunnelling hypothesis and the so-called pre-big bang scenario. But in my opinion by far the most elegant is what Jim Hartle and I called the no-boundary proposal. This can be paraphrased as the boundary condition of the universe is that it has no boundary. In other words, space and imaginary time together are curved back on themselves to form a closed surface like the surface of the Earth but with more dimensions. The surface of the Earth has no boundary, either. There are no reliable reports of someone falling over the edge of the world.

The no-boundary condition and the other theories are just proposals for the boundary conditions of the universe. To test them we have to calculate what predictions they make and compare them with the new observations that are coming in. At the moment, the observations are not good enough to distinguish between these different kinds of maps. But new observations in the next few years may settle the question. This is an exciting time in cosmology.

My money is on the no-boundary condition. It is such an elegant explanation, I’m sure God would have chosen it.

The progress that has been made in unifying gravity with the other forces has been entirely theoretical. This has led to charges from people like John Horgan that physics is dead because it has become just a mathematical game, not related to experiment. But I don’t agree.

Although we can’t produce particles of the Planck energy — the energy at which gravity would be unified with other forces — there are predictions that can be tested at lower energies. The Superconducting Super Collider that was being built in Texas would have reached these energies but it was cancelled when the U.S. went through a fit of feeling poor. So, we shall have to wait for the Large Hadron Collider that is being built in Geneva.

Assuming that the Geneva experiments confirm current theory, what are the prospects for a complete unified theory? In 1980 I said I thought there was a 50-50 chance of us finding a complete unified theory in the next 20 years. That is still my estimate, but the 20 years begins now. I will be back in another 20 years to tell you if we made it.

Professor Stephen Hawking of the University of Cambridge spoke to a sell-out crowd at Convocation Hall April 27, 1998. The event was sponsored by the Global Knowledge Foundation.

4. Hawking, The Theory of Everything (Including Black Hole Particle Theory)

M-Systems: 1, 5, 9, 10, 15

Transcribed and commented upon by Nick Ray Ball 13th May 2016

Part 1

27.25 Hawking:

‘At the very tiny level, our universe is like a crazy dance of waves, dangling through a myriad of beats. Particles appear and disappear at random. At this level, nothing is certain, not even existence.’

Narrator: Atoms are a bit like people, they are very hard to predict with absolute certainty. They do roughly what you would expect of them, but never exactly.

Nick Ray Ball:

http://www.theguardian.com/small-business-network/2014/jul/08/theo-paphitis-startups-dragons-den says 50% of new businesses fail in the first couple of years, and http://www.forbes.com/sites/ericwagner/2013/09/12/five-reasons-8-out-of-10-businesses-fail/#35b11bd95e3c says 8 out of 10 businesses fail.

(Businesses are more like QM than people.)

Also, we got the ‘E’ in the ‘RES>100%’ equation from considering how to avoid economic black holes on 15th March 2012: http://www.s-world.biz/TST/The_Black_Hole.htm

Isaac Asimov: “You may not predict what an individual may do, but you can put in motion things that will move the masses in a direction that is desired, thus shaping, if not predicting, the future.”

Professor Hawking continues…

With atoms it’s hard to be totally certain if they exist or not. Sometimes atoms pop up when there should be absolutely nothing around (NRB: immaculate conception?). In fact, out in space it happens all the time.

Space, the void itself, cannot have absolutely zero energy.

At an atomic level, there is always a tiny, tiny bit of activity going on (NRB: add 1 penny to every cube in the global network cube). So, Hawking asked ‘What happens when the perfectly smooth sphere of a black hole meets this microscopic energy field of space?’

In empty space this energy takes the form of pairs of subatomic particles that emerge out of the void, exist for less than a nanosecond and then annihilate each other (if we sped up time, this could describe some people).

Professor Bernard Carr: So, the idea is, that out of nothing if you like, a pair of particles are created and then exist for a short time and then annihilate. And that is happening throughout space. So, it’s happening there, there and there.

Presenter: This pair of particles is like yin and yang opposites that would not exist without each other. One has positive mass and the other, strangely has negative mass. So, Hawking asked: ‘What would happen if that negative mass particle bumped up against a black hole?’

Hawking realised that the positive particle would have just enough energy to escape the black hole but the particle with negative mass would fall in and it would do something extraordinary to the black hole.

The particle that goes inside of the black hole eventually decreases the mass of the black hole as it effectively has negative mass. But the particle that goes off to the distant observer, is then observed as part of radiation.

Hawking: ‘Although we now understand black holes have to give off thermal radiation, it came as a complete surprise at the time. At first I thought I must have made a mistake.’

35:20 Hawking:

By applying quantum mechanics to black holes in the 1970s I was able to revolutionise our understanding of them. To expand our understanding further we need to bring quantum mechanics into the heart of the black hole.

Presenter: Could Hawking use the theory of the tiny to describe the birth of our entire universe?

…

Hawking: ‘If we want to know what happened when the universe began we have to have a theory that can describe gravity on the very small scale.’

Presenter: It is the roughness at the moment of creation that gave rise to the birth of suns and galaxies. As Hawking predicted, we live due to the imperfections of creation.

Nick Ray Ball:

POP is like GR, but like our universe is made up of 70% Dark Energy. There is a lot more to the S-World economy than just the GR cubes. The overflow of 1 string in POP could be many times the POP event horizon.

Nick Ray Ball:

Butterfly Effect is a Theory of Everything. To measure it, you need a GR grid from the point of flap to hurricane, but you need QM to measure the changes in the grids.

5. Hawking – The Grand Design

By Stephen Hawking & Leonard Mlodinow

M-System 1, 2, 3, 4, 5, 6, 9, 10, 11, 12, 13, 14, 15, 16

14th May 2016

CD 1 – Part 6

1643 to 1727: Newton was the person who won widespread acceptance for the modern concept of a scientific law, with his three laws of motion and his law of gravity, which accounted for the orbits of the earth, moon and planets and explained phenomena such as the tides. The handful of equations that he created, and the elaborate mathematical framework we have since derived from them, are still taught today and employed whenever an architect designs a building, an engineer designs a car, or a physicist calculates how to aim a rocket meant to land on Mars.

As the poet Alexander Pope wrote, “Nature and Nature’s laws lay hid in night: God said, Let Newton be! and all was light.”

Today most scientists would say a law of nature is a rule that is based upon an observed regularity and provides predictions that go beyond the immediate situations upon which it is based.

For example, you might notice that the sun has risen in the east all our lives and postulate the law, ‘The sun always rises in the east.’ This is a generalisation that goes beyond our limited observations of the rising sun and makes testable predictions about the future.

Part 6 or 7 | F or G: 6.22

Most laws of nature exist as a part of a larger interconnected system of laws. In modern science laws of nature are usually phrased in mathematics. They can either be exact or approximate but they must have been observed to hold without exception, if not universally then at least under a stipulated set of conditions.

For example, we now know that Newton’s laws must be modified if objects are moving at velocities close to the speed of light. Yet we still consider Newton’s laws to be laws because they hold, at least to a very good approximation, for the conditions of the everyday world, in which the speeds we encounter are far below the speed of light.

If Nature is governed by laws, then these 3 questions arise.

- What is the origin of the laws?

- Are there any exceptions to the laws? i.e. miracles

- Is there only one set of possible laws?

8H: 1.27

It is difficult to imagine how free will can operate if our behaviour is determined by physical law, so it seems that we are no more than biological machines and that free will is just an illusion.

While conceding that human behaviour is indeed determined by the laws of nature, it also seems reasonable to conclude that the outcome is determined in such a complicated way and with so many variables as to make it impossible in practice to predict.

For that, one would need a knowledge of the initial state of all the thousand, trillion, trillion molecules in the human body, and to solve something like that number of equations. That would take a few billion years, which would be a little late to duck when the person opposite aimed a blow.

Because it is so impractical to use the underlying physical laws to predict human behaviour, we adopt what is called an ‘effective theory.’ In physics an effective theory is a framework created to model certain observed phenomena without describing in detail all underlying processes.

For example, we cannot solve exactly the equations governing the gravitational interactions of every atom in a person’s body with every atom in the earth. But for all practical purposes the gravitational force between a person and the earth can be described in terms of just a few numbers, such as the person’s total mass.

Similarly, we cannot solve the equations governing the behaviour of complex atoms and molecules, but we have developed an effective theory called chemistry, that provides an adequate explanation of how atoms and molecules behave in chemical reactions, without accounting for every detail of the interactions.

In the case of people, since we cannot solve the equations that determine our behaviour, we use the ‘effective theory’ that people have free will. The study of our will and of the behaviour that arises from it is the science of psychology.

Economics is also an effective theory, based on the notion of free will plus the assumption that people evaluate their possible courses of action and choose the best. That effective theory is only moderately successful in predicting behaviour because, as we all know, decisions are often not rational or are based on a defective analysis of the consequences of the choice. That is why the world is in such a mess!

Nick Ray Ball…

“You may not predict what an individual may do, but you can put in motion, things that will move the masses in a direction that is desired. Thus, shaping if not predicting the future.”

CD H: 3.18

Chapter 4

22. A model is a good model if it:

M-Systems 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16

17th June 2016

- Is Elegant

Elegance, for example, is not something easily measured, but it is highly prized among scientists because laws of nature are meant to economically compress a number of particular cases into one simple formula.

Elegance refers to the form of a theory, but is closely related to a lack of adjustable elements, since a theory jammed with fudge factors is not very elegant. To paraphrase Einstein, ‘A theory should be as simple as possible, but not simpler!’

- Contains few arbitrary or adjustable elements

- Agrees with and explains all existing observations

- Makes detailed predictions about future observations that can disprove or falsify the model if they are not borne out

Other good points Index…

Chapter 1.

2 mins Model Dependencies *****

12 mins Pythagoras = First string theory ***

26 Initial conditions **

33 Newton’s laws ***

35 Determinism Laplace

37 Chapter 2 economics

Chapter 3 + 8 mins

Model Dependent Realism

Chapter 3 + 12 min – 2 examples, two different models, choose the best

40 ish – Voyager and gates simulations

Chapter 3 near beginning, S-World Voyager

Chapter 4 Alternative Histories

6. Uncertainty Principle, Determinism, Make Testable Theories by repeating an experiment many times…

M-System 1, 4, 11, 12, 13, 14

18th May 2016

Pt 2.

In general, the larger the object, the less apparent and robust are the quantum effects.

>

3.34mins > If individual particles interfere with themselves, the wave nature of light is the property not just of the beam or a large collection of photons, but of the individual particles.

Another of the main tenets of quantum physics is the uncertainty principle, formulated by Werner Heisenberg in 1926.

The uncertainty principle tells us that there are limits to our ability to simultaneously measure certain data, such as the position and velocity of a particle.

According to the uncertainty principle, for example: If you multiply the uncertainty in the position of a particle by the uncertainty in its momentum (its mass times velocity) the result can never be smaller than a certain fixed quantity, called Planck’s constant. That’s a tongue twister but its gist can be stated plainly: The more precisely you measure speed, the less precisely you can measure position.
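As a rough numerical illustration of this trade-off, the sketch below uses the standard textbook form of the relation, in which the product of the two uncertainties is at least half the reduced Planck constant. The function name and the example electron values are assumptions chosen for illustration; they are not from the book.

```python
# Reduced Planck constant in joule-seconds (CODATA value, rounded).
HBAR = 1.054571817e-34

# Electron rest mass in kilograms (CODATA value, rounded).
ELECTRON_MASS = 9.1093837015e-31


def min_position_uncertainty(mass_kg, velocity_uncertainty_m_s):
    """Smallest position uncertainty (metres) allowed by dx * dp >= hbar / 2."""
    momentum_uncertainty = mass_kg * velocity_uncertainty_m_s
    return HBAR / (2.0 * momentum_uncertainty)


# Hypothetical example: an electron whose speed is known to within
# 1000 m/s, and then to within 100 m/s.
coarse = min_position_uncertainty(ELECTRON_MASS, 1000.0)
fine = min_position_uncertainty(ELECTRON_MASS, 100.0)
print(coarse, fine)
```

Measuring the speed ten times more precisely makes the smallest possible position uncertainty ten times larger, which is the gist stated in the text.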

Nick Ray Ball > Consider…

Read Amanda Stretch Notes

Then read ‘The PQS & QuESC Notes’ for the next 3 paragraphs

In Professor Stephen Hawking’s 2010 ‘The Grand Design’ chapter 4 (pt3) it says…

‘Quantum physics may seem to undermine the idea that nature is governed by laws, but that is not the case.

Instead it leads us to accept a new form of determinism: Given the state of a system at some time the laws of nature determine the probabilities of various futures and pasts, rather than determining the future and past with certainty.

Though that is distasteful to some, scientists must accept theories that agree with experiment, not their own preconceived notions.

What science does demand of a theory is that it be testable. If the probabilistic nature of the predictions of quantum physics meant it was impossible to confirm those predictions, then quantum theories would not qualify as valid theories. But despite the probabilistic nature of their predictions, we can still test quantum theories.

For instance, we can repeat an experiment many times and confirm that the frequency of various outcomes conforms to the probabilities predicted.
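The idea of testing a probabilistic prediction by repetition can be sketched with a simple simulation. This is only an illustration using a pseudo-random number generator in place of a real experiment; the predicted probability of 0.25 and the function name are hypothetical.

```python
import random


def observed_frequency(probability, trials, seed=0):
    """Fraction of `trials` independent runs in which the outcome occurs."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = sum(1 for _ in range(trials) if rng.random() < probability)
    return hits / trials


predicted = 0.25  # hypothetical probability predicted by a theory

# The more often the experiment is repeated, the closer the observed
# frequency is expected to sit to the predicted probability.
for trials in (100, 10_000, 1_000_000):
    print(trials, observed_frequency(predicted, trials))
```

A single run tells us almost nothing, but the frequency over many runs can be compared with the prediction, and a persistent mismatch would count against the theory.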

7. Inflation, quantum mechanics, general relativity & the warpage of time creates space-time.

M-Systems (POP) 5, 9, 15

25th May 2016

A few words about inflation: unless you have lived in Zimbabwe, where inflation reached 200 million percent, the term may not sound very explosive. But according to even conservative estimates, during this cosmological inflation the universe expanded by a factor of 1,000,000,000,000,000,000,000,000,000,000 (30 zeros), during a period of 0.00000000000000000000000000000000001 (34 zeros) second.

It was as if a coin 1 cm in diameter suddenly blew up to 10 million times the width of the Milky Way. That may seem to defy relativity, which dictates that nothing can move faster than light, but that speed limit does not apply to the expansion of space itself.

This expansion is far more extreme than the expansion predicted in the traditional Big Bang Theory of general relativity during the time interval in which inflation occurred. The problem is that, for our theoretical model of inflation to work, the initial state of the universe had to be set up in a very special and highly improbable way.

Since we cannot describe creation employing Einstein’s theory of general relativity, if we want to describe the origin of the universe, general relativity needs to be replaced by a more complete theory, because general relativity does not take into account the small-scale structure of matter, which is governed by quantum theory.

For most practical purposes, quantum theory does not hold much relevance for the study of the large-scale structure of the universe because quantum theory applies to the description of nature at microscopic scales. But if you go far enough back in time, the universe was as small as the Planck size, a billion-trillion-trillionth of a centimetre, which is the scale at which quantum theory does have to be taken into account. If we want to understand the origin of the universe we must combine what we know about general relativity with quantum theory.

To see how this works, we need to understand the principle that gravity warps space and time. Warpage of space is easier to explain than warpage of time. Imagine an ant at the edge of a circle, wishing to cross from side A to B, where B is the opposite end of the circle. If the surface from A to B was flat, the quickest route is a straight line. But if there was a dent between points A and B, the distance the ant travels from one side to the other is further. And if the dent (curvature) was deep enough, the ant would find that the shortest distance from A to B is not in a straight line; rather, the shortest distance is around the edge of the circle.

The same is true of warpage in our universe: it stretches or compresses the distance between points of space, changing its geometry or shape in a way that is measurable from within the universe. Warpage of time stretches or compresses time intervals in an analogous manner.

Time and space can become intertwined. Once we add the effects of quantum theory to general relativity, in extreme cases, such as the Big Bang or black holes, warping of time can occur to such a great extent that time behaves like another dimension of space.

In the early universe, when the universe was small enough to be governed by both general relativity and quantum theory, there were effectively four dimensions of space and none of time.

That means that when we speak of the beginning of the universe we are skirting the subtle issue that as we look backward towards the very early universe, time as we know it does not exist. We must accept that our usual ideas of space and time do not apply to the very early universe. That it is beyond our experience, but not beyond our imagination or mathematics.’

8. M Theory by Stephen Hawking Part 1

M-Systems 9, 16

Transcribed by Nick Ray Ball 26th May 2016

The Grand Design Chapter 1

An edit of the final chapter of ‘The Grand Design’ written by Professor Stephen Hawking in 2010

Because there is a law like gravity the universe can and will create itself from nothing. Spontaneous creation is the reason there is something rather than nothing, why the universe exists and why we exist.

M-Theory is the most general supersymmetric theory of gravity; for these reasons M-Theory is the only candidate for a complete theory of the universe. M-Theory is the unified theory Einstein was hoping to find.

If the theory is confirmed by observation, it will be the successful conclusion of a search going back more than 3,000 years. We will have found the Grand Design, The Theory of Everything.

9. Dimensions & the laws of nature are extremely fine-tuned

M-Systems (POP) 5, 9, 15 & http://www.angeltheory.org/m-systems/system16/angelverses-super-summary

28th May 2016

Chapter 7 part 4

If one assumes that a few hundred million years in stable orbit are necessary for planetary life to evolve, the number of space dimensions is also fixed by our existence. That is because, according to the laws of gravity, it is only in 3 dimensions that stable elliptical orbits are possible. Circular orbits are possible in other dimensions, but those, as Newton feared, are unstable.

In any but 3 dimensions even a small disturbance, such as that produced by the pull of the other planets, would send a planet off its circular orbit and cause it to spiral either into or away from the sun, so we would either burn up or freeze. Also, in more than 3 dimensions the gravitational force between two bodies would decrease more rapidly than it does in 3 dimensions.

In 3 dimensions the gravitational force drops to 1/4 of its value if one doubles the distance, in 4 dimensions it would drop to 1/8, in 5 dimensions it would drop to 1/16 and so on.
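The pattern in these fractions can be checked with a short sketch. It assumes that in d space dimensions the gravitational force falls off as 1/r^(d-1), which reduces to the familiar inverse-square law when d = 3; the function name is hypothetical.

```python
def force_ratio_on_doubling(space_dimensions):
    """Factor by which gravity weakens when the distance doubles.

    Assumes the force falls off as 1 / r**(d - 1) in d space dimensions.
    """
    return 1 / 2 ** (space_dimensions - 1)


for d in (3, 4, 5):
    print(d, force_ratio_on_doubling(d))
```

Under that assumption, doubling the distance leaves 1/4 of the force in 3 dimensions, 1/8 in 4 dimensions and 1/16 in 5 dimensions, halving again with each extra dimension.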

As a result, in more than 3 dimensions the sun would not be able to exist in its stable state, with its internal pressure balancing the pull of gravity. It would either fall apart or collapse to form a black hole, either of which would ruin your day.

On the atomic scale the electrical forces would behave in the same way as gravitational forces, that means the electrons in atoms would either escape or spiral into the nucleus. In neither case would atoms as we know them be possible.

The emergence of the complex structures capable of creating intelligent observers seems very fragile, the laws of nature form a system that is extremely fine-tuned and very little in physical law can be altered without destroying the possibility of the development of life as we know it.

Were it not for a series of startling coincidences in the precise details of physical law, it seems humans and similar life forms would never have come into being.

10. Spiritual Introduction to M Theory (pt. 2) and the fine tuning of the laws of nature.

The Grand Design

M-Systems 1, 2, 3, 4, 5, 9, 10, 11, 12, 13, 14, 15, 16

30th May 2016

Chapter 7 part 5

In Western culture the Old Testament contains the idea of providential design in its story of creation, but the traditional Christian viewpoint was greatly influenced by Aristotle, who believed in an intelligent natural world that functions according to some deliberate design. The medieval Christian philosopher Thomas Aquinas employed Aristotle’s ideas about the order in nature to argue the existence of God.

A more modern illustration of the Christian view was given a few years ago when Cardinal Christoph Schönborn, Archbishop of Vienna, wrote: Now at the beginning of the 21st Century, faced with scientific claims like Neo-Darwinism and the Multiverse (many universes) hypothesis in cosmology, invented to avoid the overwhelming evidence for purpose and design found in modern science, the Catholic Church will again defend human nature, by proclaiming that the immanent design of nature is real.

But the discovery relatively recently of the extreme fine-tuning of so many of the laws of nature could lead at least some of us back to the old idea that this grand design is the work of some grand designer.

In the United States, because the constitution prohibits the teaching of religion in schools, that type of idea is called intelligent design, with the unstated but implied understanding that the designer is God.

Many people through the ages have attributed to God the beauty and complexity of nature.

Einstein once posed to his assistant, Ernst Straus, the question ‘Did God have any choice when he created the universe?’

In the late 16th Century Kepler was convinced that God had created the universe according to some perfect mathematical principle. Newton showed that the same laws that apply in the heavens apply on earth, and developed mathematical equations to express those laws that were so elegant they inspired almost religious fervour among many 18th century scientists who seemed intent on using them to show that God was a mathematician.

Ever since Newton and especially since Einstein the goal of physics has been to find simple mathematical principles (of the kind that Kepler envisaged,) and with them to create a unified Theory of Everything, that would account for every detail of the matter and forces we observe in nature.

In the late 19th and early 20th century, Maxwell and Einstein united the theories of electricity, magnetism, and light. In the 1970s the standard model was created, a single theory of the strong and weak nuclear forces and the electromagnetic force.

String Theory and M Theory then came into being in an attempt to include the remaining force ‘Gravity.’

The goal was to find not just a single theory that explains all the forces, but also one that explains the fundamental fine-tuning, such as the strength of the forces and the masses and charges of the elementary particles.

As Einstein put it, the hope was to be able to say that ‘Nature is so constituted that it is possible logically to lay down such strongly determined laws that within these laws only rationally completely determined constants occur. Not constants therefore whose numerical value could be changed without destroying the Theory.’

A unique theory would be unlikely to have the fine-tuning that allows us to exist, but if in light of recent advances, we interpret Einstein’s dream to be that of a unique theory that explains this and other universes, with their whole spectrum of different laws, then M-Theory could be that theory! But is M-Theory unique, or demanded by any simple logical principle? Can we answer the question why M-Theory?

11. Energy a conserved quantity, Black Holes, Einstein & M-Theory (pt3)

Stephen Hawking – The Grand Design

M Systems: 9, 10, 11

30th May 2016

Chapter 8 pt3

Any set of laws that describes a continuous world, such as our own, will have a concept of energy, which is a conserved quantity, meaning it doesn’t change in time. The energy of empty space will be a constant, independent of both time and position.

One can subtract out this constant vacuum energy by measuring the energy of any volume of space relative to that of the same volume of empty space. So, we may as well call the constant zero.

One requirement any law of nature must satisfy is that it dictates that the energy of an isolated body surrounded by empty space is positive, which means that one has to do work to assemble the body. That’s because if the energy of an isolated body were negative, it could be created in a state of motion, so that its negative energy was exactly balanced by the positive energy due to its motion.

If that were true, there would be no reason that bodies could not appear anywhere and everywhere. Empty space would therefore be unstable, but if it costs energy to create an isolated body that could not …

Because, as we have said, the energy of the universe must remain constant. That is what it takes to make the universe locally stable, to make it so that things don’t just appear everywhere from nothing.

If the total energy of the universe must always remain zero, and it costs energy to make a body, how can a whole universe be created from nothing?

That is why there must be a law like gravity. Because gravity is attractive, gravitational energy is negative: one has to do work to separate a gravitationally bound system, such as the earth and moon. This negative energy can balance the positive energy needed to create matter.

But it’s not quite that simple. The negative gravitational energy of the earth, for example, is less than a billionth of the positive energy of the matter particles the earth is made of. A body such as a star will have more negative gravitational energy, and the smaller it is (the closer the different parts of it are to each other), the greater this negative gravitational energy will be. But before it can become greater than the positive energy of the matter, the star will collapse to a black hole, and black holes have positive energy.
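The ‘less than a billionth’ figure for the earth can be sanity-checked with a back-of-envelope sketch. It assumes the earth is a sphere of uniform density, for which the magnitude of the gravitational self-energy is 3GM²/(5R), and compares that with the rest energy Mc². The rounded constants and the function name are assumptions for illustration; the real earth is denser toward the centre, so this is an order-of-magnitude estimate only.

```python
G = 6.674e-11           # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8             # speed of light, m/s
EARTH_MASS = 5.972e24   # kg
EARTH_RADIUS = 6.371e6  # m


def binding_to_rest_energy_ratio(mass, radius):
    """|gravitational self-energy| / (m c^2) for a uniform-density sphere."""
    # Magnitude of the (negative) gravitational energy of the sphere.
    binding_energy = 3 * G * mass**2 / (5 * radius)
    rest_energy = mass * C**2
    return binding_energy / rest_energy


ratio = binding_to_rest_energy_ratio(EARTH_MASS, EARTH_RADIUS)
print(ratio)
```

With these inputs the ratio comes out at a few parts in ten billion, consistent with the statement that the earth’s negative gravitational energy is less than a billionth of the positive energy of its matter.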

That’s why empty space is stable. Bodies such as stars and black holes cannot just appear out of nothing. But a whole universe can.

Because gravity shapes space and time, it allows space-time to be locally stable, but globally unstable. On the scale of the entire universe, the positive energy of the matter can be balanced by the negative gravitational energy, and so there is no restriction on the creation of whole universes.

Because there is a law like gravity, the universe can and will create itself from nothing, in the manner described in chapter 6. Spontaneous creation is the reason why there is something rather than nothing, why the universe exists, why we exist.

Why are the fundamental laws as we have described them? The ultimate theory must be consistent and must predict finite results for quantities that we can measure. We’ve seen that there must be a law like gravity, and we saw in chapter 5 that for a theory of gravity to predict finite quantities, the theory must have what is called supersymmetry between the forces of nature and the matter on which they act. M-Theory is the most general supersymmetric theory of gravity. For these reasons, M-Theory is the only candidate for a complete theory of the universe.

If it is finite (and this has yet to be proved) it will be a model of a universe that creates itself. We must be part of this universe because there is no other consistent model.

M-Theory was the unified theory Einstein was hoping to find.

The fact that we human beings, who are ourselves mere collections of fundamental particles of nature, have been able to come this close to an understanding of the laws governing us and our universe is a great triumph. But perhaps the true miracle is that abstract considerations of logic lead to a unique theory that predicts and describes a vast universe full of the amazing variety that we see.

If the theory is confirmed by observation, it will be the successful conclusion of a search going back more than three thousand years. We will have found the grand design.

12. Pythagoras the father of theoretical physics and his Theory of Strings

M-Systems: 0 (The GGW String)

30th May 2016

Taken from the book ‘The Grand Design’

Part 1

Ignorance of nature’s ways led people in ancient times to invent gods to lord over every aspect of human life. There were gods of love and war and the sun, earth, and sky, of the oceans and rivers, of rain and thunderstorm, even of earthquakes and volcanoes. When the gods were pleased, mankind was treated to good weather, peace and freedom from natural disasters and disease. When they were displeased, there came drought, war, pestilence and epidemics.

Since the connection of cause & effect in nature was invisible to their eyes, these gods appeared inscrutable and people were at their mercy.

But with Thales of Miletus, circa 624 to 546 BC, about 2600 years ago, that began to change. The idea arose that nature follows consistent principles that could be deciphered. And so began the long process of replacing the notion of the reign of gods with the concept of a universe that is governed by laws of nature, and created according to a blueprint we could someday learn to read.

According to Aristotle, circa 384 to 322 BC, it was about 500 BC when Thales first came up with the idea that the world can be understood, that the complex happenings around us could be explained without resorting to mythical or theological explanations.

Thales is credited with the first prediction of a solar eclipse in 585 BC. He was a shadowy figure who left behind no writings of his own. His home was one of the intellectual centres in a region called Ionia, which was colonised by the Greeks and exerted an influence that eventually reached from Turkey to as far west as Italy.

Ionian science was an endeavour marked by a strong interest in uncovering fundamental laws to explain natural phenomena, a tremendous milestone in the history of ideas.

Their approach was rational and in many cases came to conclusions surprisingly similar to what our more sophisticated methods have led us to believe today. It represented a grand beginning.

But over the centuries much of Ionian science would be forgotten, only to be rediscovered or reinvented, sometimes more than once.

According to legend, the first mathematical formulation of what we might today call a law of nature dates back to an Ionian named Pythagoras, circa 580 to 490 BC, famous for the theorem named after him: that the square of the hypotenuse (longest side) of a right triangle equals the sum of the squares of the other two sides.

Pythagoras is said to have discovered the numerical relationship between the length of the strings used in musical instruments and the harmonic combinations of the sounds. In today’s language, we would describe that relationship by saying that the frequency (the number of vibrations per second) of a string vibrating under fixed tension is inversely proportional to the length of the string. From a practical point of view, this explains why shorter guitar strings are at a higher pitch than longer ones.

There is evidence that some relation between string length and pitch was known in his day. If so, one could call that simple mathematical formula the first instance of what we now know as theoretical physics.
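The string relationship described above can be written as a one-line formula and tried out in a small sketch. It assumes only what the text states, that at fixed tension the frequency is inversely proportional to the length; the reference pitch of 110 Hz and the function name are hypothetical.

```python
def string_frequency(reference_hz, reference_length, length):
    """Frequency of a string at fixed tension, assuming f is proportional to 1 / length."""
    return reference_hz * reference_length / length


open_string = 110.0  # Hz, hypothetical pitch of the full-length string

# Shortening the vibrating length raises the pitch in simple ratios.
for fraction in (1.0, 2 / 3, 0.5):
    print(fraction, string_frequency(open_string, 1.0, fraction))
```

Halving the length doubles the frequency (an octave), and a string two-thirds as long vibrates one and a half times as fast, the kind of simple numerical ratio the legend attributes to Pythagoras.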

13. Hawking’s Mind of God, Branding & ‘I don’t believe that the ultimate theory will come by steady work along existing lines’

https://www.youtube.com/watch?v=Jzkm1E5RqJo

Black Holes and Baby Universes and Other Essays (1993)

M-Systems: Introduction

5th June 2016

In the last paragraph of your book, it says:

‘If we discover a complete theory of the universe, then it should in time be understandable in broad principles to everyone and not just to a few scientists.

And when that happens all of us will be able to discuss the why rather than the how.

If we find the answer to that, it would be the ultimate triumph of human reason, for then we would know the mind of God.’

Last lines. Hawking later wrote: “In the proof stage I nearly cut the last sentence in the book… Had I done so, the sales might have been halved.”

Other quotes from https://en.wikiquote.org/wiki/Stephen_Hawking

I don’t believe that the ultimate theory will come by steady work along existing lines. We need something new. We can’t predict what that will be or when we will find it because if we knew that, we would have found it already!

14. What is Reality the Matrix, Galileo, and Model Dependent Realism

M-Systems: 7, 8, 11, 12, 13, 14

10th June 2016

Simplicity is a matter of taste.

A few years ago, the city council of Monza, Italy, barred pet owners from keeping goldfish in curved goldfish bowls. The measure’s sponsor explained the measure in part by saying it is cruel to keep a fish in a bowl with curved sides because, gazing out, the fish would have a distorted view of reality.

But how do we know we have the true undistorted picture of reality? Might not we ourselves also be in some big goldfish bowl and have our vision distorted by an enormous lens? The goldfish’s view of reality is different from ours, but can we be sure it is less real? The goldfish view is not the same as our own, but goldfish could still formulate scientific laws governing the motion of the objects they observe outside their bowl.

For example, due to the distortion, a freely moving object that we would observe to move in a straight line would be observed by the goldfish to move along a curved path. Nevertheless, the goldfish could formulate scientific laws from their distorted frame of reference that would always hold true and that would enable them to make predictions about the future motions of objects outside the bowl. Their laws would be more complicated than the laws in our frame, but simplicity is a matter of taste. If a goldfish formulated such a theory, we would have to admit the goldfish’s view is a valid reality.

A famous example of different pictures of reality is a model introduced around AD 150 by Ptolemy (circa AD 85 to AD 165) to describe the motion of the celestial bodies. Ptolemy published his work in a 13-book treatise usually known under its Arabic title, ‘Almagest.’

The Almagest begins by explaining reasons for thinking that earth is spherical, motionless, positioned at the centre of the universe and negligibly small in comparison to the distance of the heavens. Despite Aristarchus’s heliocentric model, these beliefs had been held by most educated Greeks, at least since the time of Aristotle, who believed for mystical reasons that the earth should be at the centre of the universe.

In Ptolemy’s model the earth stood still at the centre and the planets and the stars move around it in complicated orbits involving epicycles, like wheels on wheels.

This model seemed natural as we don’t feel the earth under our feet moving (except in earthquakes or moments of passion). Later European learning was based on the Greek sources that had been passed down, so that the ideas of Aristotle and Ptolemy became the basis for much of Western thought.

Ptolemy’s model of the cosmos was adopted by the Catholic church and held as official doctrine for 1400 years. It was not until 1543, that an alternative model was put forward by Copernicus in his book ‘De Revolutionibus Orbium Coelestium’ (On the Revolutions of the heavenly Spheres), published only in the year of his death (though he had worked on his theory for several decades).

Copernicus, like Aristarchus some 17 centuries earlier, described a world where the sun was at rest and the planets revolved around it in circular orbits. Though the idea was not new, its revival was met with passionate resistance. The Copernican model was held to contradict the Bible, which was interpreted as saying that the planets move around the earth, even though the Bible never clearly stated that. In fact, at the time the Bible was written people believed the earth was flat.

The Copernican model led to a furious debate as to whether the earth was at rest, culminating in Galileo’s trial for heresy in 1633 for advocating the Copernican model and for thinking “that one may hold and defend as probable an opinion after it has been declared and defined contrary to the Holy Scripture.”

He was found guilty, confined to house arrest for the rest of his life, and forced to recant. He is said to have muttered under his breath “Eppur si muove” (but still it moves). In 1992 the Roman Catholic Church finally acknowledged that it had been wrong to condemn Galileo.

So which is real, the Ptolemaic or Copernican system? Although it is not uncommon for people to say that Copernicus proved Ptolemy wrong, that is not true. One can use either picture as a model of the universe, for our observations of the heavens can be explained by assuming either the earth or the sun to be at rest. Despite its role in philosophical debates over the nature of our universe, the real advantage of the Copernican system is simply that the equations of motion are much simpler in the frame of reference in which the sun is at rest.
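The point that both pictures explain the same observations can be illustrated with a minimal sketch. It assumes idealised circular, coplanar orbits and rounded orbital values, and the function names are hypothetical. It is not Ptolemy’s actual construction, only a toy comparison of the two frames of reference.

```python
import math

# Assumed, rounded values: (orbital radius in AU, period in years).
EARTH = (1.000, 1.000)
MARS = (1.524, 1.881)

def circle(radius, period, t):
    """Position on an idealised circular orbit at time t (in years)."""
    angle = 2 * math.pi * t / period
    return (radius * math.cos(angle), radius * math.sin(angle))

def mars_from_earth_sun_at_rest(t):
    """Sun at rest: each planet follows one circle; subtract to get the view from earth."""
    ex, ey = circle(*EARTH, t)
    mx, my = circle(*MARS, t)
    return (mx - ex, my - ey)

def mars_from_earth_earth_at_rest(t):
    """Earth at rest: the sun circles the earth and Mars rides a second
    circle centred on the moving sun (a wheel on a wheel)."""
    ex, ey = circle(*EARTH, t)
    sun_x, sun_y = -ex, -ey          # the sun's circle around the earth
    mx, my = circle(*MARS, t)        # Mars's circle around the sun
    return (sun_x + mx, sun_y + my)

for t in (0.0, 0.3, 1.7):
    a = mars_from_earth_sun_at_rest(t)
    b = mars_from_earth_earth_at_rest(t)
    print(t, math.isclose(a[0], b[0], abs_tol=1e-12), math.isclose(a[1], b[1], abs_tol=1e-12))
```

Both functions predict the same position of Mars in the sky. The difference is only that the sun-at-rest description needs one circle per planet, while the earth-at-rest description needs a circle carried on another circle.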

A different kind of alternative reality occurs in the science fiction film ‘The Matrix,’ in which the human race is unknowingly living in a simulated virtual reality created by intelligent computers to keep them pacified and content while the computers suck their bioelectrical energy (whatever that is).

Maybe this is not so far-fetched because many people prefer to spend their time in the simulated reality of websites such as Second Life. How do we know we are not just characters in a computer-generated soap opera? If we lived in a synthetic imaginary world, events would not necessarily have any logic or consistency or obey any laws.

The aliens in control might find it interesting or amusing to see our reaction, for example, if the full moon split in half, or everyone in the world on a diet got an uncontrollable craving for banana cream pie. But if the aliens did enforce consistent laws, there is no way we could tell that there was another reality behind the simulated one. It would be easy to call the world the aliens lived in the ‘real’ one and the synthetic world a ‘false’ one. But if, like us, the beings in the simulated world could not gaze into their universe from the outside, there would be no reason for them to doubt their own pictures of reality. This is a modern version of the idea that we are all figments of someone else’s dream.

These examples bring us to a conclusion that will be important in this book: that there is no picture- or theory-independent concept of reality. Instead we will adopt a view that we will call ‘model dependent realism,’ the idea that a physical theory or world picture is a model, generally of a mathematical nature, and a set of rules that connect the elements of the model to observations. This provides a framework with which to interpret modern science.

15. Observation vs theory & Model dependent realism plus Gates Code & Holograms

The Grand Design – Chapter 3 What is Reality pt.2

10th June 2016

Philosophers from Plato onwards have argued for years about the nature of reality. Classical science is based on the belief that there exists a real external world whose properties are definite and independent of the observer who sees them.

According to classical science, certain objects exist and have physical properties, such as speed and mass, that have well-defined values. In this view our theories are attempts to describe those objects and their properties, and our measurements and perceptions correspond to them.

Both observer and observed are part of a world that has an objective existence, and any distinction between them has no meaningful significance. In other words,

“if you see a herd of zebras fighting for a spot in the parking garage, it is because there really is a herd of zebras fighting for a spot in the parking garage.”

All other observers who look will measure the same properties and the herd will have those properties whether anyone observes them or not.

In philosophy that belief is called realism.

Though realism may be a tempting viewpoint, what we know about modern physics makes it a difficult one to defend. For example,

“according to the principles of quantum physics, which is an accurate description of nature, a particle has neither a definite position nor a definite velocity, unless and until those quantities are measured by an observer.”

It is therefore not correct to say that a measurement gives a certain result because the quantity being measured had that value at the time of the measurement.

In fact, in some cases individual objects don’t even have an independent existence, but rather exist only as part of an ensemble of many. And if a theory called the holographic principle proves correct, we and our 4-dimensional world may be shadows on the boundary of a larger 5-dimensional space-time.

Strict realists often argue that the proof that scientific theories represent reality lies in their success. But different theories can successfully describe the same phenomenon through disparate (different in kind) conceptual frameworks. In fact, many scientific theories that had proven successful were later replaced by other equally successful theories based on wholly new concepts of reality.

Traditionally those who did not accept realism have been called anti-realists. Anti-realists suppose a distinction between empirical knowledge and theoretical knowledge. They typically argue that observation and experiment are meaningful but that theories are no more than useful instruments that do not embody any deeper truths underlying the observed phenomena.

Some anti-realists have even wanted to restrict science to things that could be observed. For that reason, many in the 19th century rejected the idea of atoms on the grounds that we would never see one. George Berkeley (1685 to 1753) even went as far as to say nothing exists except the mind and its ideas.

The philosopher David Hume (1711 to 1776) wrote that although we have no rational grounds for believing in objective reality, we also have no choice but to act as if it is true.

Model-dependent realism short-circuits all this argument and discussion between the realist and anti-realist schools of thought.

According to model-dependent realism, it is pointless to ask whether a model is real, only whether it agrees with observation. If there are two models that both agree with observation, then one cannot say whether one is more real than another. One can use whatever model is more convenient in the situation under consideration.

We make models in science but we also make them in everyday life. Model-dependent realism applies not only to scientific models but also to the conscious and subconscious mental models we all create in order to interpret and understand the everyday world. There is no way to remove the observer ‘us’ from our perception of the world, which is created from our sensory processing and through the way we think and reason. Our perception and hence the observation on which our theories are based is not direct, but rather it is shaped by a kind of lens, the interpretive structure of our human brains.

Gates Code

Model-dependent realism corresponds to the way we perceive objects. In vision, one’s brain receives a series of signals down the optic nerve. Those signals do not constitute the sort of image you would accept on your television. There is a blind spot where the optic nerve attaches to the retina, and the only part of your field of vision with good resolution is a narrow area of about one degree of visual angle around the retina’s centre, an area the width of your thumb held at arm’s length. And so, the raw data sent to the brain are like a badly pixelated picture with a hole in it. Fortunately, the human brain processes that data, combining the input from both eyes (like James Gates’ Error Blocking Browser Codes), filling in gaps on the assumption that the visual properties of neighbouring locations are similar, and interpolating.

Moreover, it reads a two-dimensional array of data from the retina and creates from it the impression of three-dimensional space. The brain, in other words, builds a mental picture, or model.

The brain is so good at model building that if people are fitted with glasses that turn images upside down, their brains, after a time, change the model so that they again see things the right way up. If the glasses are then removed, they see the world upside down for a while, then again adapt.
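The gap-filling step described above, assuming that neighbouring locations look similar and interpolating between them, can be sketched in a few lines. This is only a toy illustration on a one-dimensional row of brightness values, with a hypothetical function name. It makes no claim about how the brain actually does it.

```python
def fill_gaps(samples):
    """Replace each missing value (None) with the average of its nearest known neighbours."""
    filled = list(samples)
    for i, value in enumerate(samples):
        if value is None:
            # Nearest known value to the left and to the right, if any.
            left = next((samples[j] for j in range(i - 1, -1, -1)
                         if samples[j] is not None), None)
            right = next((samples[j] for j in range(i + 1, len(samples))
                          if samples[j] is not None), None)
            known = [v for v in (left, right) if v is not None]
            filled[i] = sum(known) / len(known) if known else None
    return filled

row = [10, 12, None, 16, 18]   # None marks the 'hole' in the picture
print(fill_gaps(row))          # [10, 12, 14.0, 16, 18]
```

The filled-in value is a guess built from the model’s assumption about neighbouring points, not something that was ever observed.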

This shows that what one means when one says ‘I see a chair’ is merely that one has used the light scattered by the chair to build a mental image or model of a chair. If the model is upside down, with luck one’s brain will correct it before one tries to sit on the chair.

Part 3

Another problem that model-dependent realism solves or at least avoids is the meaning of existence.

16. Economics is an ‘effective theory.’ Free will is not always rational

The Grand Design

M-Systems: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16

18th June 2016

Most laws of nature exist as a part of a larger interconnected system of laws.

In modern science laws of nature are usually phrased in mathematics. They can either be exact or approximate but they must have been observed to hold without exception, if not universally then at least under a stipulated set of conditions.

For example, we now know that Newton’s Laws must be modified if objects are moving at velocities close to the speed of light. Yet we still consider Newton’s Laws to be laws because they hold, at least to a very good approximation, for the conditions of the everyday world, in which the speeds we encounter are far below the speed of light.

While conceding that human behaviour is indeed determined by the laws of nature, it also seems reasonable to conclude that the outcome is determined in such a complicated way and with so many variables as to make it impossible in practice to predict.

Because it is so impractical to use the underlying physical laws to predict human behaviour, we adopt what is called an ‘effective theory.’ In physics an effective theory is a framework created to model certain observed phenomena without describing in detail all underlying processes.

For example, we cannot solve exactly the equations governing the gravitational interactions of every atom in a person’s body with every atom in the earth. But for practical purposes the gravitational force between a person and the earth can be described in terms of just a few numbers such as the person’s total mass.
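As a rough illustration of how few numbers the effective description needs, the sketch below applies Newton’s law of gravitation, F = G·M·m / r², to a person standing on the earth’s surface. The 70 kg mass is an assumed example value and the constants are rounded standard figures. Treating the earth as a single mass at its centre is the simplifying assumption.

```python
G = 6.674e-11            # gravitational constant, N m^2 / kg^2 (rounded)
EARTH_MASS = 5.972e24    # kg (rounded)
EARTH_RADIUS = 6.371e6   # m, mean radius (rounded)

def gravitational_force(person_mass_kg):
    """Force in newtons on a person at the earth's surface,
    using only total masses and the distance between centres."""
    return G * EARTH_MASS * person_mass_kg / EARTH_RADIUS ** 2

# Assumed example: a 70 kg person. No atom-by-atom calculation is needed.
print(round(gravitational_force(70.0)))   # about 687 newtons
```

Three constants and one mass stand in for the interactions of an enormous number of atoms, which is the sense in which the description is ‘effective.’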

Similarly, we cannot solve the equations governing the behaviour of complex atoms and molecules, but we have developed an effective theory called chemistry that provides an adequate explanation of how atoms and molecules behave in chemical reactions, without accounting for every detail of the interactions.

In the case of people, since we cannot solve the equations that determine our behaviour, we use the ‘effective theory’ that people have free will. The study of our will and of the behaviour that arises from it is the science of psychology.

Economics is also an effective theory, based on the notion of free will plus the assumption that people evaluate their possible courses of action and choose the best. That effective theory is only moderately successful in predicting behaviour because, as we all know, decisions are often not rational, or are based on a defective analysis of the consequences of the choice. That is why the world is in such a mess!

17. Model dependent realism and a network of theories called M-Theory (pt.4)

The Grand Design – Chapter 3, pt.5

M-Systems: Introduction, 12, 13 & 14

17th June 2016

In our quest to find the laws that govern the universe, we have formulated a number of theories or models, such as the four-element theory, the Ptolemaic model, the Big Bang theory and so on.

With each theory or model, our concepts of reality and of the fundamental constituents of the universe have changed.

For example, consider the theory of light. Newton thought that light was made up of little particles. This would explain why light travels in straight lines.

However, interference effects studied in the nineteenth century were taken as confirming that light is a wave. Then, early in the 20th century, Einstein showed that the photoelectric effect (now used in television and digital cameras) could be explained by a particle or quantum of light striking an atom and knocking out an electron. Thus, light behaves as both particle and wave.
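The particle explanation of the photoelectric effect can be put into numbers with Einstein’s relation: a quantum of light carries energy E = h·c / λ, and an electron is knocked out only if that energy exceeds the material’s work function. The sketch below is a minimal illustration. The work function of 2.3 electron volts is an assumed, illustrative value and the function names are hypothetical.

```python
PLANCK = 6.62607015e-34       # Planck constant, J s
LIGHT_SPEED = 299792458.0     # speed of light, m / s
EV = 1.602176634e-19          # joules per electron volt

def photon_energy_ev(wavelength_m):
    """Energy of a single quantum of light, in electron volts."""
    return PLANCK * LIGHT_SPEED / wavelength_m / EV

def ejected_electron_energy_ev(wavelength_m, work_function_ev=2.3):
    """Maximum kinetic energy of the ejected electron, or None if the
    photon cannot free one. The 2.3 eV default is an illustrative assumption."""
    surplus = photon_energy_ev(wavelength_m) - work_function_ev
    return surplus if surplus > 0 else None

print(ejected_electron_energy_ev(400e-9))   # violet light: an electron is ejected
print(ejected_electron_energy_ev(700e-9))   # red light: None, however bright the beam
```

In this picture, making the red light brighter only sends more quanta of the same insufficient energy, so no electron is released. That threshold behaviour is what the particle picture accounts for.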

The idea of particles was familiar from rocks, pebbles, and sand. But this wave-particle duality, the idea that an object could be described as either a particle or a wave, is as foreign to everyday experience as is the idea that you can drink a chunk of sandstone.

Dualities like this, situations in which two very different theories accurately describe the same phenomenon, are consistent with model-dependent realism. Each theory can describe and explain certain properties, and neither theory can be said to be better, or more real than the other.